Claude Code Exposed: More Than Just 510,000 Lines of Code

- Core Viewpoint: Anthropic's technical oversight led to the source code of its Claude Code project being leaked via a .map file within an npm package, revealing the underlying architecture of its AI programming assistant, its multi-agent collaboration mechanism, and a hidden digital pet feature named Buddy, showcasing a product trend towards long-term interaction and a sense of companionship.

- Key Elements:

- The leak originated from the cli.js.map file in the @anthropic-ai/claude-code package, containing approximately 512,000 lines of code and exposing 4,756 source files, including core product implementations.

- The code architecture shows the system employs a modular design, separating the interface, execution environment, tool calls, etc., facilitating expansion and maintenance, and utilizes multi-agent collaboration to handle complex tasks.

- The leaked content revealed a hidden digital pet feature named Buddy, possessing rarity, species, appearance, and attribute systems, designed for long-term existence rather than a one-time April Fools' activity.

- Buddy is generated based on a hash of the user ID, with rarity divided into five tiers (e.g., Legendary accounts for 1%), 18 species, and attribute values randomly assigned based on rarity; it currently lacks a growth mechanism.

- This leak incident highlights deeper challenges and development directions in AI agent engineering concerning long-term judgment records, credit accumulation, and continuous feedback.

Cause of the Incident

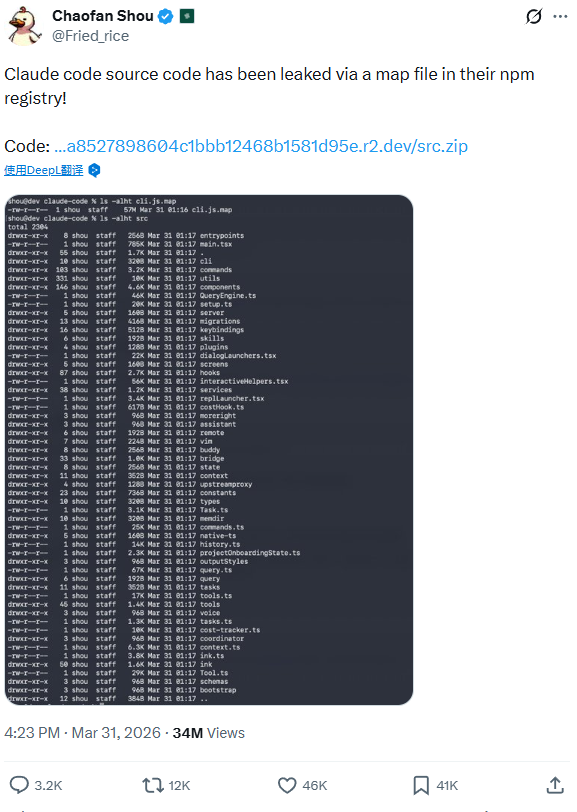

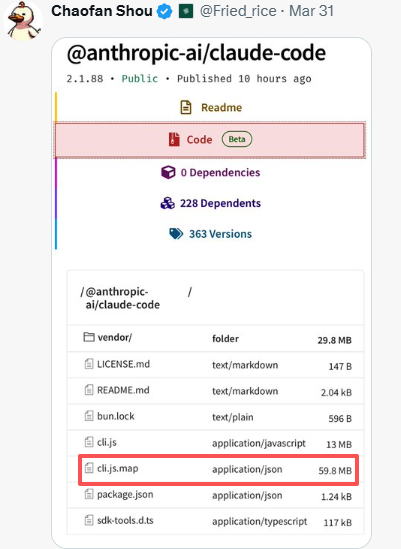

The files were first posted on X by a user named Chaofan Shou. Version 2.1.88 of Anthropic's official npm package @anthropic-ai/claude-code included a cli.js.map file of roughly 60 MB. The file contained both the source filenames and the source code itself, so anyone who downloaded it could extract the code. Within hours, mirror repositories on GitHub had gathered thousands of stars. Anthropic began deleting files and issuing DMCA takedown notices (removal requests under U.S. copyright law), but the spread could not be contained.

A .map File

The mechanism behind this incident is simple. Source map (.map) files exist for debugging: they map bundled output back to the original source. If a map also embeds the source code content, anyone who obtains the file can extract the code. No complex reverse engineering was involved; the package was simply published without a final check, and the code shipped along with it. It is a very basic mistake.
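To make the mechanism concrete, here is a minimal sketch of why an embedded source map needs no reverse engineering. It relies only on the standard Source Map v3 fields `sources` and `sourcesContent`; the toy map, its file names, and the helper `embedded_sources` are made up for illustration and are not taken from the leaked package.

```python
import json

# A tiny, made-up source map in the Source Map v3 shape.
# File names and contents are illustrative only.
toy_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": [
        "export const main = () => greet('world');\n",
        None,  # a source whose content was not embedded
    ],
    "mappings": "",
})


def embedded_sources(map_text: str) -> dict[str, str]:
    """Return {source path: original text} for sources embedded in the map."""
    data = json.loads(map_text)
    paths = data.get("sources", [])
    contents = data.get("sourcesContent") or []
    # Entries line up by index; a null entry means "content not included".
    return {
        path: text
        for path, text in zip(paths, contents)
        if text is not None
    }


recovered = embedded_sources(toy_map)
for path, text in recovered.items():
    print(path, "->", len(text), "characters of original source")
```

When `sourcesContent` is absent or null, the map still lists file names but carries no code, which is why checking a published package for embedded maps is the relevant final step.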

The Operational Logic Revealed by Claude Code

The 512,000 lines amount to an entire layer of product implementation. The leaked material contains 4,756 source files, of which 1,906 are Claude Code's own code; most of the rest are external tools and libraries that it calls.

Through the code, it's evident that the interface, execution environment, tools, central hub, memory, permissions, and editor bridge are all built separately. This makes it easier to add tools, modify behaviors, and connect new entry points later. The entire system is also more scalable.

The interaction and execution parts are deeply integrated. When a user types a command in the terminal, it is received and processed directly, and the result is sent back. This step-by-step, iterative loop closely mirrors how code is actually written: trying something, looking at the result, and fixing it.

Tool calls are chained into a complete sequence of actions. It first reads a file, then modifies code, runs commands, checks the results, and proceeds to the next step. In other words, it handles not a single response but a continuous series of operations.
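The read, modify, run, check sequence can be pictured as a loop in which each tool result feeds the choice of the next action. The sketch below is only an illustration of that pattern: the in-memory `files` workspace, the three tool functions, and the rule-based `next_action` policy (standing in for the model) are all hypothetical and do not reflect the leaked implementation.

```python
from typing import Callable, Optional

# Hypothetical in-memory workspace so the sketch runs without touching disk.
files = {"app.py": "print('helo')\n"}


def read_file(path: str) -> str:
    return files[path]


def edit_file(path: str, old: str, new: str) -> str:
    files[path] = files[path].replace(old, new)
    return "edited"


def run_check(path: str) -> str:
    # Stand-in for running a command such as a test suite.
    return "pass" if "hello" in files[path] else "fail"


TOOLS: dict[str, Callable[..., str]] = {
    "read_file": read_file,
    "edit_file": edit_file,
    "run_check": run_check,
}


def next_action(history: list[tuple[str, str]]) -> Optional[tuple[str, tuple]]:
    """Stub policy in place of the model: choose the next tool call, or None when done."""
    if not history:
        return ("read_file", ("app.py",))
    last_tool, last_result = history[-1]
    if last_tool == "read_file" and "helo" in last_result:
        return ("edit_file", ("app.py", "helo", "hello"))
    if last_tool == "edit_file":
        return ("run_check", ("app.py",))
    if last_tool == "run_check" and last_result == "fail":
        return ("read_file", ("app.py",))
    return None


def run_task(max_steps: int = 10) -> list[tuple[str, str]]:
    """Run tools one after another, feeding each result back into the next decision."""
    history: list[tuple[str, str]] = []
    for _ in range(max_steps):
        action = next_action(history)
        if action is None:
            break
        name, args = action
        history.append((name, TOOLS[name](*args)))
    return history


history = run_task()
print([name for name, _ in history])
```

The `max_steps` cap is a design choice of this sketch: a loop driven by its own results needs some bound so that a check that never passes cannot run forever.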

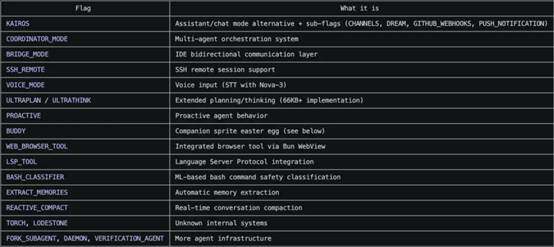

Furthermore, when dealing with complex tasks, Claude Code's approach is to split the task among multiple agents, then collect and process the results in one place. This reduces the context burden on each individual agent, and if a problem occurs at any step, it is easier to locate.

This is why Claude Code has become a genuinely functional development tool rather than merely a text-completion model.
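As a rough picture of the split-and-collect pattern, the sketch below fans subtasks out to stub workers and gathers the results in one place. Everything here is hypothetical: `sub_agent`, `coordinate`, and the task names are invented for illustration, and a real sub-agent would call a model rather than count files.

```python
from concurrent.futures import ThreadPoolExecutor


def sub_agent(task: tuple[str, list[str]]) -> dict:
    """Stub worker: it sees only its own subtask, not the whole conversation."""
    name, paths = task
    if not paths:
        return {"task": name, "ok": False, "output": "no files to work on"}
    return {"task": name, "ok": True, "output": f"handled {len(paths)} file(s)"}


def coordinate(tasks: list[tuple[str, list[str]]]) -> tuple[list[dict], list[str]]:
    """Fan subtasks out to workers, then collect the results in input order."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        results = list(pool.map(sub_agent, tasks))  # map preserves input order
    # Each result is tagged with its subtask, so a failure is easy to locate.
    failed = [r["task"] for r in results if not r["ok"]]
    return results, failed


tasks = [
    ("parser", ["parse.ts"]),
    ("docs", []),
    ("tests", ["a.test.ts", "b.test.ts"]),
]
results, failed = coordinate(tasks)
print("failed subtasks:", failed)
```

Because each worker receives only its own slice of the task, no single context has to hold everything, and the per-subtask result records show which step went wrong.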

Buddy

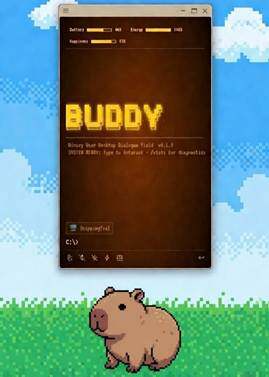

What truly went viral from this leak was a previously unannounced feature: Buddy, a digital pet with rarity tiers, attributes, and 18 different species.

It was introduced as an April Fools' Day easter egg. Users see a little companion next to the input box that blinks, performs small animations, and occasionally shows a speech bubble. Typing `/buddy pet` makes it emit hearts, and calling it by name also gets a response. It doesn't touch any core coding functionality; it is purely for companionship.

Buddy isn't obtained through repeated gacha pulls. The system takes your user ID, appends a fixed string, hashes the result, and uses that hash as the seed for a random number generator, which then produces the outcome. Because the same ID always yields the same seed, a single user ID can only ever obtain one Buddy; it cannot be farmed by rerolling. The rarity you get is purely a matter of luck.
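The ID, fixed string, hash, seed chain can be sketched as follows. This is an illustration under stated assumptions rather than the leaked code: the article does not name the fixed string, the hash function, or the random number generator, so `SALT`, SHA-256, and Python's `random.Random` are stand-ins chosen for the example.

```python
import hashlib
import random

# Hypothetical fixed string; the real one is not given in the article.
SALT = "buddy-example-salt"


def buddy_rng(user_id: str) -> random.Random:
    """Derive a per-user random generator: hash(user ID + fixed string) becomes the seed."""
    digest = hashlib.sha256((user_id + SALT).encode("utf-8")).digest()
    seed = int.from_bytes(digest[:8], "big")  # first 8 bytes as an integer seed
    return random.Random(seed)


def roll(user_id: str) -> list[float]:
    """Return the first three draws for a user; every later choice would come from this stream."""
    rng = buddy_rng(user_id)
    return [rng.random() for _ in range(3)]


print(roll("user-123") == roll("user-123"))  # True: same ID, same sequence
```

Since nothing but the user ID enters the seed, asking again cannot produce a different result, which is what rules out rerolling.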

There are five rarity tiers. Common accounts for 60%, Uncommon for 25%, Rare for 10%, Epic for 4%, and the rarest, Legendary, for 1%. There are 18 species in total, including duck, dragon, axolotl, capybara, mushroom, robot, snail, turtle, and others. Species and rarity are not linked; they are two independent, completely random draws.
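The tier percentages and the independence of the two draws can be sketched as a cumulative-weight pick followed by a separate uniform pick. The weights come from the figures above; the function names are hypothetical, the species list holds only the eight species named here out of 18, and the selection method itself is an assumption.

```python
import random

# Tier weights as reported above (they sum to 100).
RARITY_WEIGHTS = [
    ("Common", 60),
    ("Uncommon", 25),
    ("Rare", 10),
    ("Epic", 4),
    ("Legendary", 1),
]

# Only the eight species named in the article; the full list has 18.
SPECIES = ["duck", "dragon", "axolotl", "capybara",
           "mushroom", "robot", "snail", "turtle"]


def pick_rarity(rng) -> str:
    """Walk the cumulative weights until the roll falls inside a tier."""
    roll = rng.random() * 100
    cumulative = 0
    for name, weight in RARITY_WEIGHTS:
        cumulative += weight
        if roll < cumulative:
            return name
    return RARITY_WEIGHTS[-1][0]  # guard against floating-point edge cases


def draw(rng) -> tuple[str, str]:
    """Two independent draws: rarity first, then species, with no link between them."""
    rarity = pick_rarity(rng)
    species = rng.choice(SPECIES)
    return rarity, species


print(draw(random.Random(2024)))
```

Because species is drawn without looking at rarity, every species is equally likely at every tier in this sketch.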

Buddy's eyes and hat have a chance to be shiny, with a 1% probability. Hats only appear on non-Common tier Buddies. Hat types include crown, top hat, propeller beanie, halo, wizard hat, beanie, duckling hat, and others. Like rarity, these appearance traits are generated at the start and do not change later.

There are five attributes: DEBUGGING, PATIENCE, CHAOS, WISDOM, SNARK. The system first assigns a base score based on the drawn rarity, then randomly selects a primary attribute to boost and a weak attribute to lower. The remaining attributes are randomized between those two values. Higher rarity generally means higher overall attributes. Currently, there is no growth mechanism; attributes do not level up with how long you use it or how much code you write.
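The attribute procedure can be sketched like this. Only the five attribute names and the order of steps come from the description above; the base scores in `BASE`, the `BOOST` and `PENALTY` amounts, the 1 to 100 clamp, and the function name `roll_stats` are all hypothetical numbers and names chosen for illustration.

```python
import random

STATS = ["DEBUGGING", "PATIENCE", "CHAOS", "WISDOM", "SNARK"]

# Hypothetical base scores per tier; the real values are not given in the article.
BASE = {"Common": 30, "Uncommon": 40, "Rare": 50, "Epic": 60, "Legendary": 70}
BOOST, PENALTY = 25, 20  # hypothetical adjustments


def roll_stats(rarity: str, rng: random.Random) -> dict[str, int]:
    """Base score from rarity, one boosted stat, one lowered stat, the rest in between."""
    base = BASE[rarity]
    primary, weak = rng.sample(STATS, 2)  # two distinct attributes
    high = min(100, base + BOOST)
    low = max(1, base - PENALTY)
    stats = {}
    for name in STATS:
        if name == primary:
            stats[name] = high
        elif name == weak:
            stats[name] = low
        else:
            # Remaining attributes land somewhere between the weak and primary values.
            stats[name] = rng.randint(low, high)
    return stats


print(roll_stats("Rare", random.Random(7)))
```

Nothing in the function depends on time or usage, which matches the absence of a growth mechanism: the values are fixed once rolled.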

Will it be removed later? The leaked code shows that April 1st to 7th is a warm-up phase, during which the splash screen displays a rainbow-colored hint for Buddy. One comment is quite direct: "Command stays live forever after," indicating that the feature itself will remain, unlike a one-off April Fools' event.

With the inclusion of Buddy, Claude is no longer just a tool that runs commands in a terminal. It begins to carry a sense of companionship, also suggesting that Claude might be moving towards long-term persistence and continuous interaction.

The Claude Code leak has, for the first time, shown the outside world what Anthropic usually keeps hidden beneath the interface, allowing us to understand the underlying core that makes Claude so effective. What it reveals remains at the level of agent engineering: how tools are connected, how tasks are decomposed, and how multiple agents collaborate.

Looking beyond the code, the question shifts to another dimension: how are an agent's judgments recorded over the long term, continuously validated, and filtered against real-world outcomes, and how is short-term performance separated from long-term credibility? This is precisely the dimension NeoSoul aims to address. An agent is no longer just a tool that answers questions; instead, its judgment is placed into a system of continuous feedback, outcome accountability, and credit accumulation, so that agent capabilities can be compared, filtered, and accumulated over the long term.